Source

You can find the full example repository on GitHub: https://github.com/gmoigneu/upsun-embeddings-watches

Architecture

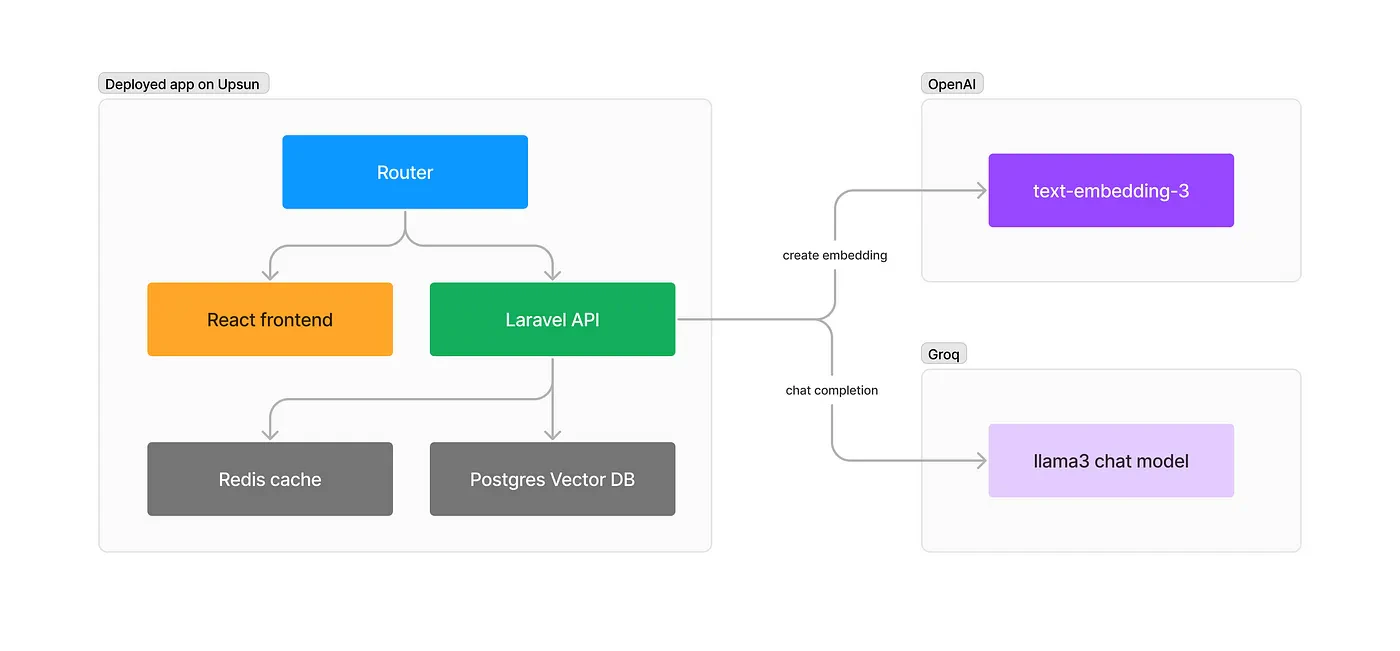

Let’s review the architecture we are going to create and deploy:

- A static React frontend is used to present the UI to the user.

- An API built with Laravel 11 will handle the search requests and populate our database.

- A Postgres instance to store our watches records and vectors for the embeddings of our content.

We will use llama3-8b-8192 as the model on Groq, but mixtral or any other would also work.

Bootstrapping our apps

You will need php8.x and composer installed locally for this to work. As we deploy our app on Upsun, you can grab the Upsun CLI by following the steps here.

First, create a new folder for your project:

We use the react-ts template, but feel free to use any other variant. We will set up shadcn/ui to speed up building our interface.

Edit the tsconfig.json file to resolve paths:

Update vite.config.ts so your app can resolve paths without error:

Run shadcn init with the following settings:

Setting up our local development environment & infrastructure

While you could use Laravel Sail or any other Docker-based local setup to develop the project, I find that relying on the Upsun tethering feature is always much quicker. It maps the remote services (database, Redis, etc.) to local ports, so you only need to run the runtimes (PHP, JS, …) locally. To do that, we first need to go through the Upsun configuration. While you can use the upsun ify CLI command to generate a configuration automatically, here is the complete configuration explained. Create a new .upsun/config.yaml and paste the following:

Then create a watches/.environment file with the following:

Our first deploy

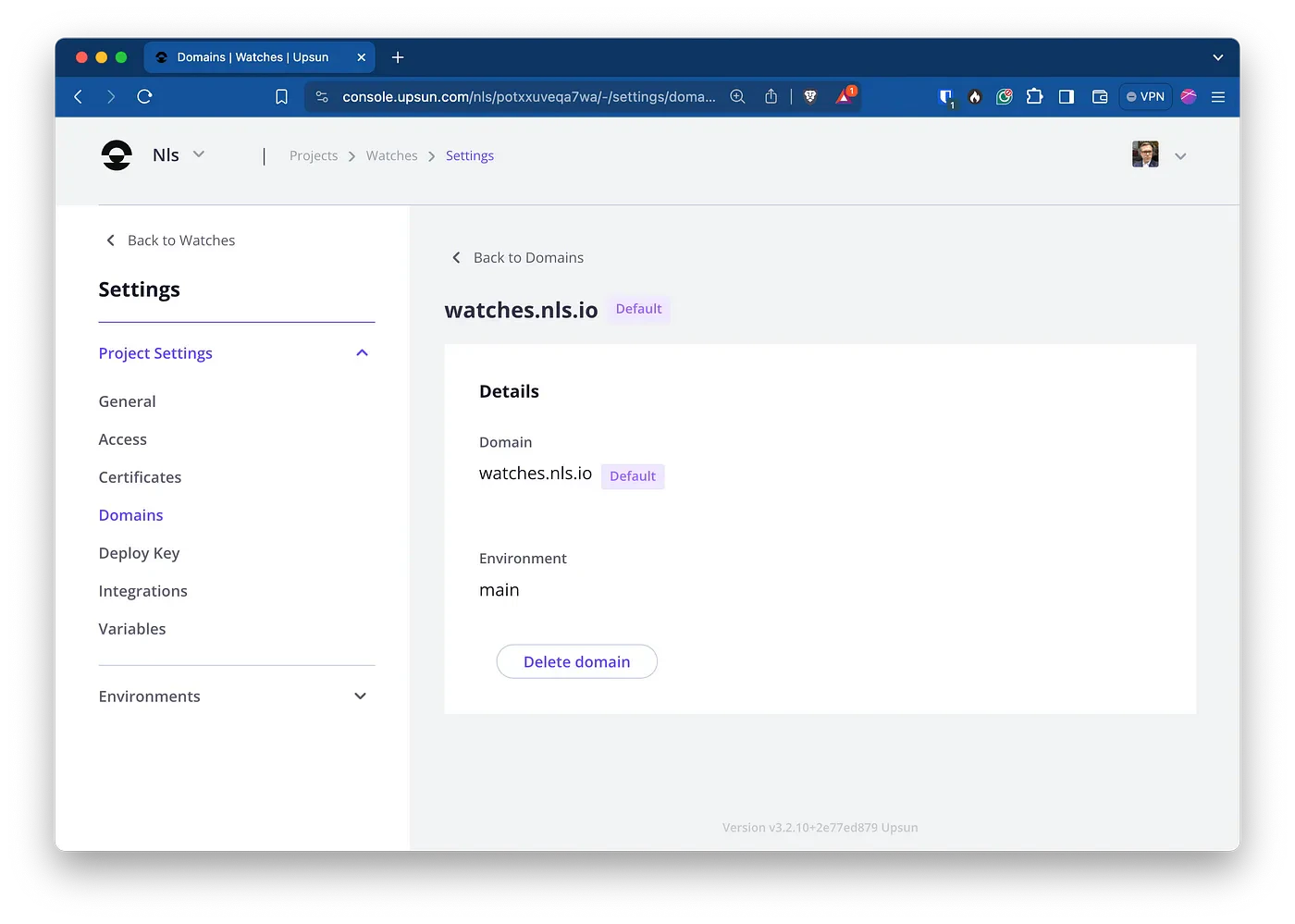

Let’s create an Upsun project for our application:

After the deploy, the two applications are served at:

https://frontend.<branch>.<project>.<region>.platformsh.site

https://app.<branch>.<project>.<region>.platformsh.site

You should be directed to the default pages for both the React and Laravel applications.

We can now connect to our remote database and cache services:

Update watches/.env to use these services:

Your local PHP needs the phpredis extension loaded. Please refer to the following documentation on the Laravel site.

If you want to use test hostnames for your applications, edit /etc/hosts or your Windows hosts file with the following or any other names you like:

127.0.0.1 watches.test frontend.watches.test api.watches.test

If so, update the .env file with the proper hostname:

Note the extra -- required for the argument to be passed through. Your local applications are now available at http://frontend.watches.test:5137 and http://api.watches.test.

Getting some data about watches

As we will recommend a specific watch to our user, we must first have a database containing the potential results. Kaggle hosted a Luxury Watch Dataset for this purpose. It is no longer available, so check https://www.kaggle.com/kashnitsky/datasets/ for other public data sets. Create an account if needed and download the CSV file.

Configuring our external services

To use the OpenAI and Groq APIs, we need to inject our credentials. Create new API keys from their respective websites. In Laravel, we will add the respective arrays in the services configuration:

Then add the keys to the .env file:

Let’s import our watches into the database

We will need a few things first. Create a Watch model. You can create it using php artisan make:model Watch -m or by adding the files manually:

The model uses the Vector custom type to store and cast the embedding column. Create the migration file for the model:

The embedding columns will use the vector type from the pgvector package.

Migrate the database:

The Vectorize job is more complex. It will:

- Parse the CSV and create the watches in the database

- Combine all attributes of each watch into a text string that we will send to OpenAI to get an embedding

- Store that embedding in our database as a vector
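To make the second step concrete, here is a minimal sketch of how a watch record could be flattened into the text that gets embedded. The tutorial's job runs in PHP inside Laravel; this TypeScript version only illustrates the shape of the transformation. The function name and the attribute names used when calling it are hypothetical and should follow the columns of your own CSV.

```typescript
// Hypothetical shape: any flat record of scalar attributes read from the CSV.
type WatchAttributes = Record<string, string | number | null | undefined>;

// Build a single "key: value; key: value" string from the non-empty attributes.
// This string is what would be sent to the embeddings API.
function watchToEmbeddingText(watch: WatchAttributes): string {
  return Object.entries(watch)
    .filter(([, value]) => value !== null && value !== undefined && value !== "")
    .map(([key, value]) => `${key}: ${value}`)
    .join("; ");
}
```

Keeping the attribute names in the text gives the embedding model some context for each value, which a bare list of values would lose. Empty fields are skipped so they do not add noise.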

Create the API controller to get the search request

Let’s create the API controller for our frontend to query the database. The controller will take care of:

- Parsing and validating the query value sent through the POST request

- Creating an embedding of the query

- Querying the database using nearestNeighbors to find the most relevant watch

- Sending a streamed request to the Groq Chat API to create a proper answer with the details of the watch and the reason why it is the best.

Then create the SearchWatchRequest validator. Remember to return true in the authorize() method, or you will get a 403 when POSTing to it.
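Conceptually, a nearest-neighbor lookup ranks the stored embeddings by their distance to the query embedding and returns the closest ones. In the tutorial this happens inside Postgres; the sketch below only illustrates the idea in memory. It assumes cosine distance, which may not be the metric the controller actually uses, and the type and function names are hypothetical.

```typescript
// Hypothetical record shape: an id plus its stored embedding vector.
interface EmbeddedRecord {
  id: number;
  embedding: number[];
}

// Cosine distance = 1 - cosine similarity. 0 means "same direction".
function cosineDistance(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error("Vectors must have the same dimension");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) {
    throw new Error("Cosine distance is undefined for zero vectors");
  }
  return 1 - dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Return the `limit` records whose embeddings are closest to the query.
function nearestNeighbors<T extends EmbeddedRecord>(
  records: T[],
  queryEmbedding: number[],
  limit: number = 1,
): T[] {
  return records
    .map((record) => ({ record, distance: cosineDistance(record.embedding, queryEmbedding) }))
    .sort((x, y) => x.distance - y.distance)
    .slice(0, limit)
    .map((entry) => entry.record);
}
```

A linear scan like this is fine for a few hundred rows. Doing the ranking in the database avoids loading every vector into the application and lets you add an index when the table grows.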

Wrap up the Laravel configuration

Two tasks need to be done before our Laravel API is fully operational. First, create a new route to handle the request:

Second, open the cors.php configuration file and review the settings:

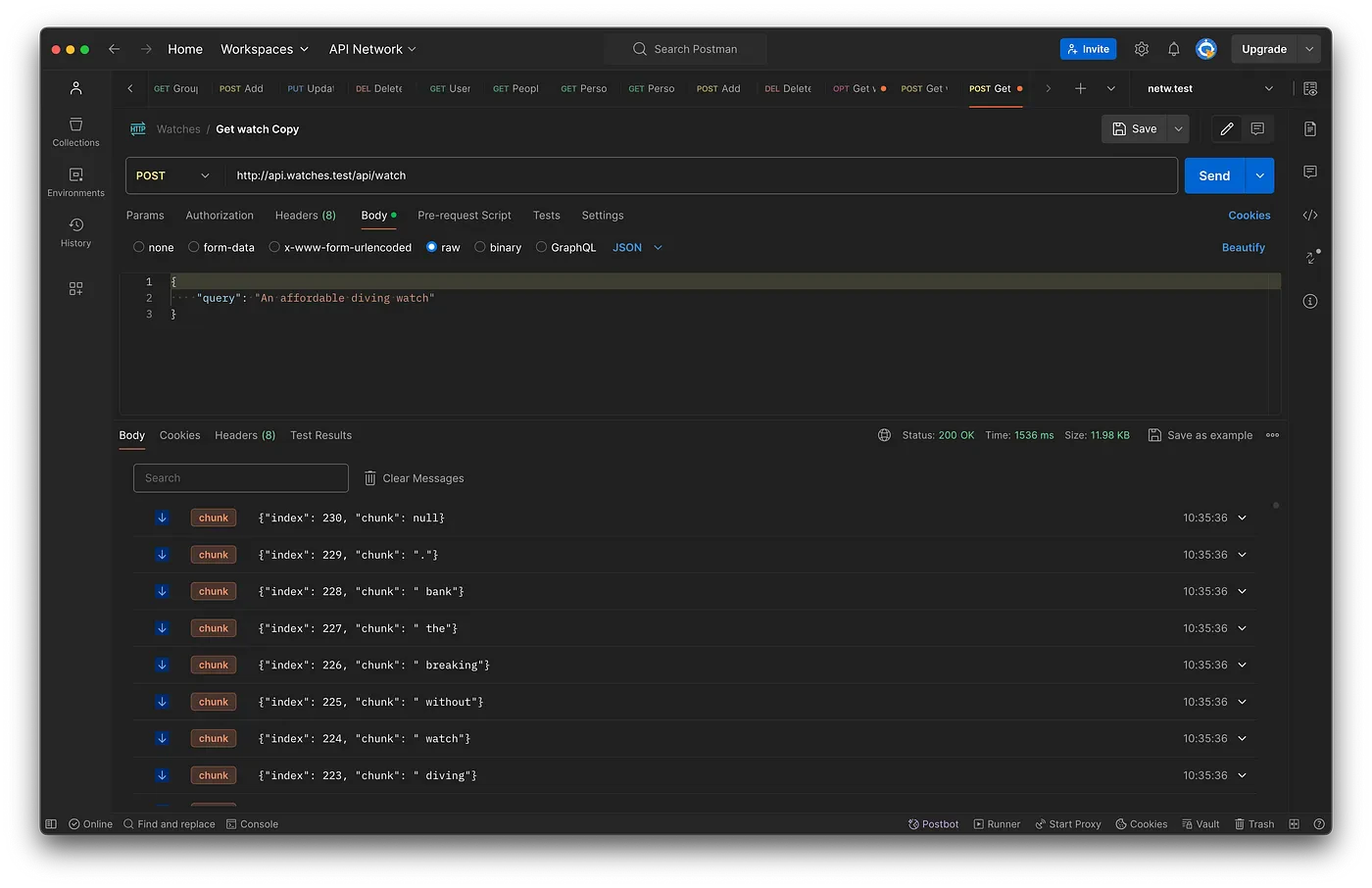

Let’s test our API!

You can now use curl or a tool like Postman to query the API and get your result. As you can see, the result is streamed in chunks by our Laravel API. Congratulations!

Let’s deploy it!

Add the vectorize job in .upsun/config.yaml so it runs in the background after every deploy without impacting the app availability. If you have more data, you might want to trigger it manually instead to avoid increasing your OpenAI bill too much.

Time to build our frontend

Let’s switch to our frontend React app now. Add the shadcn/ui components:

The components are added to src/components/ui.

We will use two different libraries to query our APIs:

- axios to query other potential endpoints of our Laravel API

- @microsoft/fetch-event-source to stream the result from our API

getApiURL will use our local development values if the hostname is watches.test. If not, it will replace the frontend string with api on any other hostname. This will allow us to make it functional in any preview environment on Upsun as well as production. You can always change the logic there to map your own deployment needs.
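The hostname logic described above could look like the following sketch. It is not the repository's code: the local values are assumptions based on the test hostnames used earlier, and the function takes the hostname and protocol as parameters so it can be exercised outside a browser.

```typescript
// Assumed local development values; adjust to match your own hosts file.
const LOCAL_HOST_SUFFIX = "watches.test";
const LOCAL_API_URL = "http://api.watches.test";

// Derive the API base URL from the hostname that serves the frontend.
function getApiURL(hostname: string, protocol: string = "https:"): string {
  // Local development: any *.watches.test hostname maps to the local API.
  if (hostname.endsWith(LOCAL_HOST_SUFFIX)) {
    return LOCAL_API_URL;
  }
  // Anywhere else: swap the first "frontend" in the hostname for "api".
  return `${protocol}//${hostname.replace("frontend", "api")}`;
}
```

In the browser you would call it as getApiURL(window.location.hostname, window.location.protocol). If your API route uses a different prefix than api, change the replacement string accordingly.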

The main App.tsx

It’s time to build that frontend application layout and logic:

- The JSX is rendered on load. We use a <Markdown/> field to render the answer.

- The user writes a prompt in the main <TextArea/> that is stored in a variable prompt using useState.

- When clicking the <Button/>, the script triggers submitForm() which creates a newStreamResponse.

- newStreamResponse starts by setting the state variable streaming to true so we can use it to freeze our UI while the request loads. It also resets the answer and creates a local variable named messageContent to store the stream content coming back from the API.

- It then uses fetchEventSource to query our Laravel API. The openWhenHidden option specifies if the stream should be listened to when the tab or window is not in focus. We pass our prompt as the body of this POST request.

- The onmessage method defines how we treat incoming data from the Event stream. Here we concatenate the new chunk to our messageContent and then update the state variable answer to show the result. In case your content is too long or the chunks are coming in too fast, you might want to delay the update to avoid too many renderings.

- When the stream ends, we reset the streaming variable to false.
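If the chunks arrive faster than the UI needs to repaint, one option is to keep concatenating every chunk but only push the accumulated text to the state at a fixed minimum interval. The class below is a small sketch of that idea and is not part of the original app. The name ThrottledAnswer, the 50 ms default, and the injectable clock are all assumptions made for illustration.

```typescript
type FlushHandler = (content: string) => void;

// Accumulates streamed chunks and forwards the full text at most once
// per `intervalMs`, plus one final time when the stream ends.
class ThrottledAnswer {
  private content: string = "";
  private lastFlush: number = Number.NEGATIVE_INFINITY;
  private readonly onFlush: FlushHandler;
  private readonly intervalMs: number;
  private readonly now: () => number;

  constructor(
    onFlush: FlushHandler,
    intervalMs: number = 50,
    now: () => number = () => Date.now(),
  ) {
    this.onFlush = onFlush;
    this.intervalMs = intervalMs;
    this.now = now;
  }

  // Call this for every incoming chunk.
  push(chunk: string): void {
    this.content += chunk;
    const current = this.now();
    if (current - this.lastFlush >= this.intervalMs) {
      this.lastFlush = current;
      this.onFlush(this.content);
    }
  }

  // Call this when the stream closes so trailing chunks are never lost.
  end(): void {
    this.onFlush(this.content);
  }
}
```

With fetchEventSource, you would call push(event.data) inside onmessage and end() inside onclose, passing the state setter for answer as the flush handler. The first chunk is always flushed immediately, so the user sees text as soon as the stream starts.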

Final deploy

Once these files are added, let’s deploy:

The result

You can now use the frontend URL that Upsun gave you in the deploy log to access your app. Let’s query it!